On 28 April 2026, the Judiciary of the State of Querétaro, Mexico, signed the first court rulings drafted with the support of generative artificial intelligence in Mexico: an uncontested divorce in the Sixth Family Court and a commercial enforcement proceeding in the Ninth Civil Court.1 The system is called SON-IA, runs on a server owned by the court itself, and uses a Large Language Model (LLM) to structure rulings from electronic case files.2 The presiding magistrate framed it as an unprecedented event in Mexico and Latin America.3

And unprecedented it is, in Mexico. But the public conversation around it is focused on the wrong question.

What everyone is debating

Coverage from La Jornada4 to Foro Jurídico5, including El Imparcial6 and local outlets, has centred on two themes: data security, and the promise that AI does not replace the judge. Quite a story. On the first point, Querétaro chose to host the system on its own server, with encryption and no dependency on commercial cloud platforms.7 On the second, the Lineamientos para el uso responsable de IA (Guidelines for the responsible use of AI), published in La Sombra de Arteaga on 24 October 2025, establish that every assisted ruling must be reviewed and validated by a judge, with a mandatory legend certifying it.8

Both topics are relevant. But neither touches the underlying problem.

What no one is asking

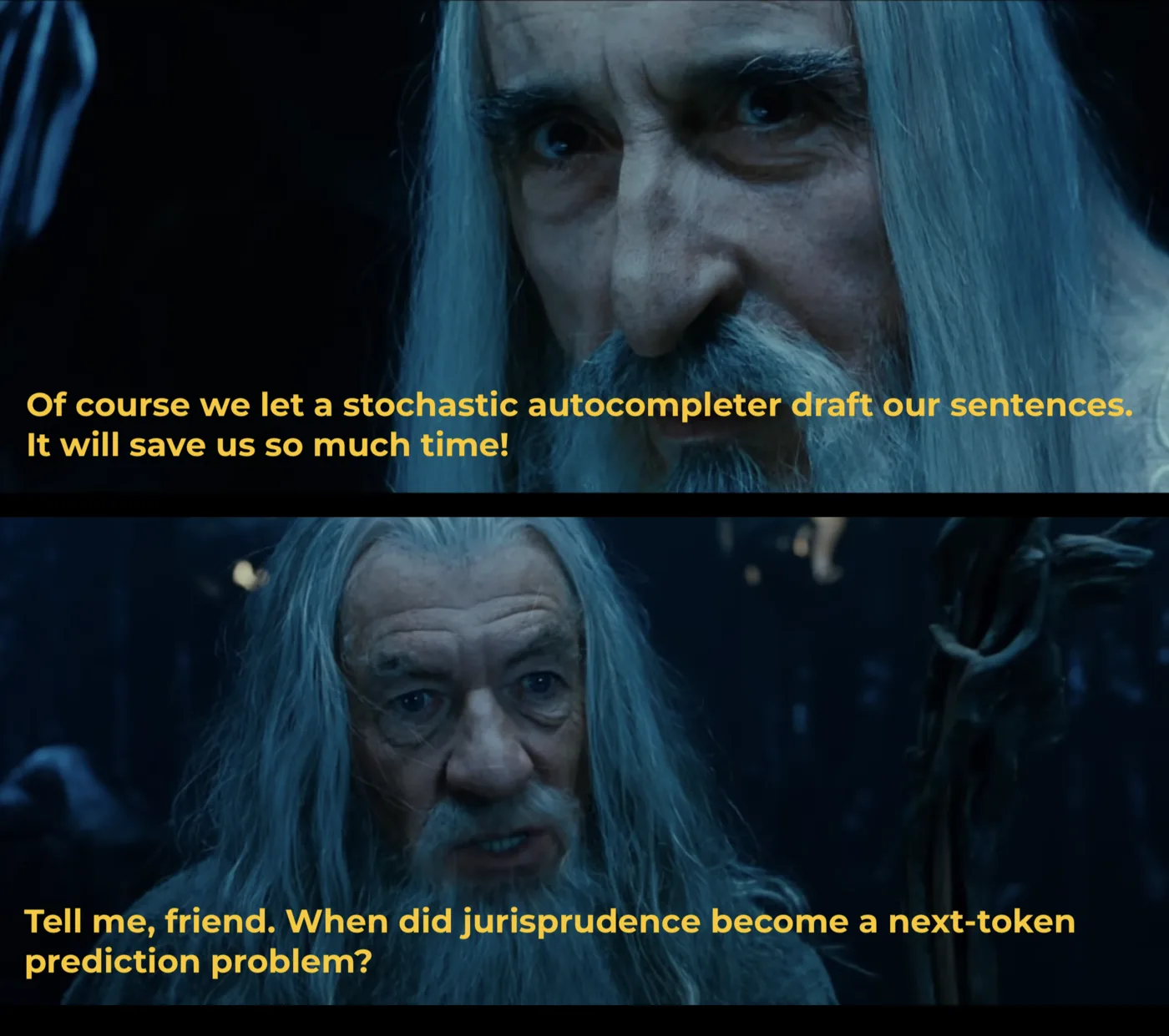

An LLM does not reason. It predicts which sequence of tokens is statistically most likely given a context. I like to keep saying this even when it annoys some people: it is a stochastic parrot. Literally. I reject the emergent capabilities narrative that Silicon Valley disciples repeat past the point of saturation. When you ask an AI to structure a ruling, you are not applying law to facts. You are generating text that resembles what a ruling looks like in a training corpus. The difference is invisible in the output… until it is not. Because the very nature of the operation makes it an epistemic error, which necessarily produces an erosion of justice.
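To make "predicting the most likely next token" concrete, here is a deliberately tiny sketch. It is not how SON-IA or any production LLM is implemented (those are transformer networks over subword tokens); it assumes a bigram counter as a stand-in, and the three-sentence corpus and the function names are invented for illustration. What it shares with the real thing is the only point that matters here: the continuation is chosen by frequency in the corpus, and no norm or fact is ever consulted.

```python
from collections import Counter, defaultdict

# Hypothetical corpus of ruling-like boilerplate (invented for illustration).
CORPUS = [
    "the court grants the divorce",
    "the court grants the petition",
    "the court denies the petition",
]

def fit_bigrams(sentences):
    """Count, for every token, which tokens follow it in the corpus."""
    following = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for current, nxt in zip(tokens, tokens[1:]):
            following[current][nxt] += 1
    return following

def most_likely_next(following, token):
    """Return the most frequent continuation, or None if the token was never followed by anything."""
    candidates = following.get(token)
    return candidates.most_common(1)[0][0] if candidates else None

model = fit_bigrams(CORPUS)
# "grants" follows "court" twice in the corpus and "denies" once,
# so the predictor "decides" to grant, whatever the case file says.
print(most_likely_next(model, "court"))
```

The output depends entirely on what the corpus happened to contain. Change one sentence in `CORPUS` and the "decision" changes with it.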

Jurisprudence in the classical, Roman sense (the one Mexico inherited) is iuris prudentia: the prudence of law, the reasoned judgement of what is just in a concrete case. It involves interpreting the norm, evaluating evidence, weighing principles in tension, and building a legal syllogism that connects major premise (norm), minor premise (facts), and conclusion (decision). All of this happens within the experience of a shared social reality, and is done by a flesh-and-blood person who signs the ruling and understands what it could feel like to stand on the other side of the mirror. Each of those steps is an act of judgement, which is fundamentally different from an act of semantic prediction.

When that act of judgement is replaced by a statistical prediction of the next token, what you get is a ruling that sounds right but was generated rather than reasoned, which violates due process. And the judge who reviews and validates it is not verifying a line of reasoning, because there was none; they are verifying whether the text looks reasonable to them. Which is exactly what an LLM is optimised to produce: text that looks reasonable. The circularity would be merely elegant if it were not playing out in the exercise of a sovereign function of the State.

The judicial function consists in the exercise of judgement on a concrete case. A token predictor exercises something radically different: it produces the most plausible answer, not the most just. So what, I will be told: if the simulation is indistinguishable from the real thing, functionalism tells us everything is fine!

No. Functionalism does not tell us everything is fine.

First, even the father of functionalism would not endorse this: Hilary Putnam formulated the thesis in 1967,9 and abandoned it himself twenty years later,10 concluding that functional states cannot account for the central properties of mental states (belief, reasoning, rationality), that is, the capacity to form and possess reasons, not merely to produce outputs that look like reasons.

Second, even if we accepted the functionalist premise, an LLM does not satisfy it: Putnam described mental states as states of a complete system that causally mediate between inputs, persistent internal states, and outputs over time. A transformer has a prompt, a forward pass, and tokens. As an Aeon essay about to celebrate its tenth anniversary puts it, our brains do not work like computers!11 Get over it. There are no internal states mediating between multiple interactions. There is no systemic integration. There is no body.

Third, neurologist Antonio Damasio showed empirically, with patients suffering ventromedial prefrontal lesions, that there is no pure reasoning detached from somatic markers: even for formal logic, legal analysis, or strategy, humans need emotional signals to assess relevance, prioritise options, and detect significant contradictions.12 An LLM produces outputs that we evaluate as logically correct, but it is only retrieving statistical patterns. In other words: human reasoning involves evaluating significance, whereas an LLM manipulates symbols. And some, impressed by that, mistake the map for the territory. Mistaking the functional utility of an autocompleter for the cognitive process of judging is precisely the category mistake on which SON-IA is built, along with many other uses of degenerative AI in justice.13 I develop this argument further, together with Ilsse C. Torres Ortega, in my most recent research paper, available alongside the rest of my academic work in my publications.

What we know (and don’t know) about SON-IA

Let’s be fair: the Querétaro Judiciary has done something no other judicial institution in Mexico has dared to do, and has done it with more structure than the media coverage allows one to see.14 It published ethical guidelines before implementation,8 ran a six-month pilot in two specific courts,15 defined a scope limited to low-complexity matters (no-fault divorce, non-contentious proceedings, summary judgment in lieu of complaint),16 and established mandatory human supervision as a normative condition. None of that is trivial.

Yet several fundamental questions have no public answer:

What problem is being solved, and is generative AI the right tool for it? Because I have seen no sign, anywhere, that alternative technologies, low-tech solutions, or even more robust technologies such as bespoke neuro-symbolic AI algorithms were considered.

What model is being used? No official communication, no court bulletin from the past six months, and no interview with the presiding magistrate identifies the underlying language model.17 We know it is not ChatGPT, Gemini, or Claude — explicitly forbidden by the institution.7 We know it runs on a local server. But it has not been disclosed whether it is Llama, Mistral, Qwen, or some other open-source model. Knowing which model produces the rulings is a minimum condition for evaluating its biases. Whether there is a violation of the very Guidelines for the responsible use of AI on which the pilot leans is a real question.

What does “six months of training” mean? The presiding magistrate stated: “entrenamos a nuestro robot durante seis meses para estar siendo especializado en derecho” (“we trained our robot for six months to be specialised in law”).4 But the presiding judge of the Sixth Family Court describes the process in more concrete terms: “hemos desarrollado prompts para tres tipos de resoluciones” (“we have developed prompts for three types of rulings”).7 There is an enormous technical gap between training a model (modifying its weights with new data) and developing prompts (writing instructions for an existing model). Public documentation does not allow us to tell which of the two actually happened.
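The gap between the two statements can be shown with a toy model. The sketch below is an assumption-laden illustration, not a description of SON-IA: `ToyModel`, its bigram "weights", and the sample sentences are all hypothetical. It only shows the structural difference: training writes to the model, prompting merely reads from it.

```python
from collections import Counter, defaultdict
import copy

class ToyModel:
    """Hypothetical stand-in for a language model: its 'weights' are bigram counts."""

    def __init__(self):
        self.weights = defaultdict(Counter)

    def train(self, text):
        """Training: new data modifies the weights themselves."""
        tokens = text.split()
        for current, nxt in zip(tokens, tokens[1:]):
            self.weights[current][nxt] += 1

    def generate(self, prompt):
        """Prompting: the instructions only set the context; weights are read, never written."""
        last = prompt.split()[-1]
        options = self.weights.get(last)
        return options.most_common(1)[0][0] if options else None

model = ToyModel()
model.train("the court grants the divorce")
before = copy.deepcopy(model.weights)

model.generate("draft a ruling for the court")   # six months of this changes nothing inside the model
assert model.weights == before

model.train("the court denies the appeal")       # this, by contrast, changes the model itself
assert model.weights != before
```

If what happened in Querétaro was the first kind of work, the model "specialised in law" is the same model it was on day one, with better instructions in front of it.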

Who evaluated the quality? The presiding magistrate stated that, after pilot trials, the system can issue documents “con alta pulcritud” (“with great neatness”)6 and on another occasion claimed they do so “con perfección sin sesgos” (“with perfection without biases”).4 These claims are not backed by any independent published evaluation. There are no benchmarks, no blind comparison between human and assisted rulings, no third-party audit. The guidelines themselves assign monitoring to the IT Department, that is, to the same team that built the system.8 Evaluating your own product is just marketing. And any data science undergraduate can smell the trick when someone declares there are no biases or hallucinations in a generative AI model.
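For reference, a blind comparison is not hard to set up. The following is a minimal sketch under stated assumptions: the function names, the ID scheme, and the numeric scoring are hypothetical, and nothing here reflects a procedure the Querétaro Judiciary has published. The idea is simply that reviewers score rulings without knowing their origin, and that the key linking IDs to origins is held by someone other than the team that built the system.

```python
import random
from statistics import mean

def blind(human_rulings, assisted_rulings, seed=0):
    """Shuffle both sets together and hide their origin behind opaque IDs."""
    labelled = [("human", text) for text in human_rulings]
    labelled += [("assisted", text) for text in assisted_rulings]
    random.Random(seed).shuffle(labelled)
    packet = {f"R{i:03d}": text for i, (_, text) in enumerate(labelled)}   # what reviewers see
    key = {f"R{i:03d}": source for i, (source, _) in enumerate(labelled)}  # held by a third party
    return packet, key

def unblind(scores, key):
    """Average the reviewers' scores per origin once all reviews are in."""
    by_source = {"human": [], "assisted": []}
    for ruling_id, score in scores.items():
        by_source[key[ruling_id]].append(score)
    return {source: mean(values) for source, values in by_source.items() if values}

# Placeholder texts and placeholder scores, not real data.
packet, key = blind(["ruling A", "ruling B"], ["ruling C", "ruling D"])
scores = {ruling_id: 3 for ruling_id in packet}
print(unblind(scores, key))
```

Only a comparison of this kind, run by an independent party and published, would give claims such as "without biases" something to stand on.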

The problem the local server does not solve

Querétaro’s decision to operate with its own infrastructure, rather than rely on a US cloud provider, makes sense from a data-sovereignty perspective. A branch of the State should not depend contractually on OpenAI, AWS, or Google to safeguard the judicial files of its citizens. That is well handled.

But data sovereignty is not cognitive sovereignty.

The server is in Querétaro. But the model that processes the case files was, whichever it is, in all likelihood trained predominantly on English text and on Anglo-Saxon legal corpora. Current LLMs, even open-source ones, absorb reasoning patterns from the common law: binding precedent, adversarial system, the reasonableness standard, stare decisis. The Mexican legal system operates on a different logic: codification, principle of legality, precedent by reiteration, the judge as applier of the norm.

When SON-IA is used to frame the dispute or weigh evidence, with what patterns does it do so? Does it prioritise precedent over norm? Does it structure the legal syllogism the way a judge trained in the Roman tradition would, or the way a model that has read millions of US federal rulings would? These questions are not solved by an air-cooled in-house server (an argument that was offered to justify how thoroughly the matter had been thought through).4

And there is an aggravating factor: this bias does not manifest as a factual error the judge could detect. It is not a wrong date or a misquoted statute. It is a way of structuring the argument, of weighing the elements, of organising the decision, of prioritising legal sources. It is invisible precisely because the LLM is optimised to produce text that looks correct. But the deeper risk lies in the abandonment of the function of judging, because writing a ruling is deciding, first-hand, what it must contain and why. And I believe this is the first time in history that a judge, whose function is to write the law or perfect it, agrees to let go of the pen and become a reviewer of something written automatically. In the name of efficiency. And without anyone asking whether writing the ruling was really the bottleneck of judicial efficiency. But that is a question for another article.

The slippery slope

The scandal is not in the divorces. It is in what comes next. The presiding magistrate has already announced extension to other courts, and explicitly mentioned criminal matters.7 The logic is predictable: if it works for divorces, why not for child support? If it works for promissory notes, why not for complex disputes? Each apparent success at a low-complexity rung becomes justification for climbing to the next. Especially because “AI gets better”.

And here enters the mirage of scaling laws: the idea that models, as they grow in parameters, become progressively more intelligent. Outputs do improve, yes: they become harder to distinguish from human text. But improving next-token prediction is not acquiring a capacity for judgement. The distance between simulation and judgement does not close with more parameters, because it is a difference in kind, not of degree.

The pressure is real, but the response to an overloaded judicial system is not to automate judgement. It is to automate everything that surrounds judgement (search, transcription, document management, anonymisation — and that last one is precisely what they are tackling in Querétaro with SON-IA), without delegating the act of judging to a process that, by definition, does not judge.

What the debate should include

SON-IA coverage oscillates between two poles: uncritical enthusiasm and generic fear. Neither of them addresses the issues that I consider important, which I will now explain in detail.

For State institutions wishing to adopt AI in the judicial function

The questions are epistemic in nature: is statistical token prediction compatible with the constitutional duty to give reasons for decisions? Can a litigant challenge the reasoning of a ruling that… was not reasoned? Because obviously, there will be rulings that don’t even pass through the filter of review by a judge who, for efficiency or any other reason, will simply stamp them reviewed. I’ll be told that judges already delegate to clerks, already use boilerplate without reviewing, already make mistakes — so what’s wrong with delegating to a machine?

Of course, it all depends on how the model is used. But in its basic generative form, an LLM changes the nature of the problem. A clerk drafting a poorly reasoned ruling is reasoning badly: they identified facts, attempted to apply a norm, built a deficient syllogism. Their error is correctable because it is an error of judgement. Using an LLM to generate a plausible ruling is not reasoning at all. Its output is a statistical prediction disguised as judgement. And when the judge signs without reviewing (which, granted, already happens), in the first case they delegated their judgement to another human being who at least exercised it. In the second, they delegated it to a process that by definition cannot exercise it. The problem does not improve with AI: it gets whitewashed and hidden behind techno-solutionism. The LLM-generated ruling looks neater, more structured, more correct than the exhausted clerk’s, and that is exactly what makes it more dangerous.

A bored, hungry, or sleepy judge is still a judge. An autocompleter that does not get hungry continues not to be a judge. Hunger and sleep are not defects of human reasoning: they are conditions of human reasoning. If the answer to judicial fatigue is to automate the judicial function, the question is not technological: it is about how many judges and under what working conditions the system operates. But that question is uncomfortable, costly, and political. A 17-million-peso server is more photogenic than a budget reform.

For the Mexican public conversation on AI and law

What is urgent is not to celebrate but to demand transparency: which model, which data, which evaluation, which procurement process, which independent audit mechanism, and what right the litigant has to know that their ruling was assisted by a text autocompleter and to challenge that assistance. Because automation bias exists, and is not going anywhere. I find it particularly disturbing that the entire conversation revolves around the technical, while very little is said about the organisational. Beyond the judge’s duty to review and to state that they did so, what does this process actually look like, and to what extent does the judge still determine the outcome of the case? Because therein lies a dilemma with no way out:

- If the judge truly reviews these simple cases — that is, re-reads the file, verifies the facts, reconstructs the legal syllogism, evaluates whether the weighing of evidence is correct, confirms the applicable norm — then they have done all the work SON-IA was supposed to spare them. The efficiency vanishes. The “acuerdo cada minuto y medio” (“a ruling every minute and a half”)4 becomes a ruling every minute and a half plus an hour of real review. And SON-IA reduces to an over-pretentious word processor.

- If the judge does not really review — the realistic scenario, the one that justifies the investment, the one that produces the announced reduction of up to 60% in time — then what determines the content of the ruling is not the judge’s judgement but a model’s statistical prediction. And the mandatory legend “reviewed and validated by a judge” becomes an institutional fiction.
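The dilemma can be put in numbers. The sketch below uses only the three figures quoted above (a minute and a half per generated ruling, an hour of real review, a claimed reduction of up to 60%). The function names are illustrative, the hour of review is a rhetorical estimate rather than a measurement, and the 90-minute baseline in the usage lines is purely hypothetical, since no baseline drafting time has been published.

```python
GENERATION_MIN = 1.5       # "a ruling every minute and a half" (figure quoted in the text)
FULL_REVIEW_MIN = 60.0     # "an hour of real review" (rhetorical estimate from the text)
CLAIMED_REDUCTION = 0.60   # "up to 60%" (figure quoted in the text)

def time_saved_fraction(baseline_min, review_min):
    """Fraction of the judge's time saved when generation is followed by review_min of review."""
    assisted_min = GENERATION_MIN + review_min
    return 1 - assisted_min / baseline_min

def baseline_needed(review_min, reduction=CLAIMED_REDUCTION):
    """Manual drafting time a case must take for the claimed reduction to survive a given review."""
    return (GENERATION_MIN + review_min) / (1 - reduction)

# With a full hour of review, the claimed 60% only holds for rulings that
# used to take about 153.75 minutes (over two and a half hours) to draft by hand.
print(baseline_needed(FULL_REVIEW_MIN))

# Hypothetical 90-minute baseline: real review cuts the saving to roughly a third...
print(time_saved_fraction(90.0, FULL_REVIEW_MIN))
# ...while no review at all yields a saving of about 98%.
print(time_saved_fraction(90.0, 0.0))
```

For low-complexity matters such as an uncontested divorce, a baseline of two and a half hours of drafting is hard to imagine, which is why the announced figure points to the second branch of the dilemma rather than the first.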

These questions touch directly on others, more fundamental: why and for what purpose is this implementation being done? What model of society is being pushed?

The server is in Querétaro

Querétaro did something valuable: it demonstrated that a Mexican state judiciary can implement generative AI with prior guidelines, a controlled pilot, and mandatory human supervision. That is more than most institutions in the country have managed.

But the question no one asked before signing those two rulings is the most important of all: when did we decide that justice is a problem of next-token prediction? Who authorised that premise? And where is the iuris prudentia — the prudence of law and the human judgement of what is just — when we delegate it to a model that does not judge, but predicts the next word?

The server is in Querétaro. The data does not leave the court. The encryption is robust. The guidelines are serious.

But the model robbed us of reason.

Footnotes

-

Judiciary of the State of Querétaro, press release, 28 April 2026 [in Spanish]. Available at: https://www.poderjudicialqro.gob.mx/noticias/despliegaBol.php?id=56519 ↩

-

Judiciary of the State of Querétaro, press release, 20 January 2026 [in Spanish]. Available at: https://www.poderjudicialqro.gob.mx/noticias/despliegaBol.php?id=56581 (reference to the formal launch of SON-IA). ↩

-

“Querétaro, primer Poder Judicial en México en emitir sentencias con apoyo de inteligencia artificial”, Foro Jurídico, 28 April 2026 [in Spanish]. Available at: https://forojuridico.mx/queretaro-primer-poder-judicial-en-mexico-en-emitir-sentencias-con-apoyo-de-inteligencia-artificial/ ↩

-

“Querétaro utiliza IA para emitir sentencias por primera vez en México”, La Jornada, 29 April 2026 [in Spanish]. Available at: https://www.jornada.com.mx/noticia/2026/04/29/estados/queretaro-utiliza-ia-para-emitir-sentencias-por-primera-vez-en-mexico ↩ ↩2 ↩3 ↩4 ↩5

-

“Por primera vez en México una inteligencia artificial ayudó a redactar dos sentencias judiciales en Querétaro”, El Imparcial, 30 April 2026 [in Spanish]. Available at: https://www.elimparcial.com/mexico/2026/04/30/por-primera-vez-en-mexico-una-inteligencia-artificial-ayudo-a-redactar-dos-sentencias-judiciales-en-queretaro-y-el-experimento-abrio-un-debate-urgente-sobre-que-tanto-puede-acelerar-la-justicia-sin-poner-en-manos-de-una-maquina-la-decision-final-de-jueces-y-juezas/ ↩ ↩2

-

“Poder Judicial de Querétaro firma primera sentencia con apoyo de Inteligencia Artificial”, Diario de Querétaro, 28 April 2026 [in Spanish]. Available at: https://oem.com.mx/diariodequeretaro/local/poder-judicial-de-queretaro-firma-primera-sentencia-con-apoyo-de-inteligencia-artificial-29715242 ↩ ↩2 ↩3 ↩4

-

Guidelines for the responsible, ethical and secure use of Artificial Intelligence in the administration of justice, Council of the Judiciary of the PJEQ, published in La Sombra de Arteaga, 24 October 2025 [in Spanish]. Available at: https://www.poderjudicialqro.gob.mx/biblio/leeDoc.php?cual=158007&reglamentos=1 ↩ ↩2 ↩3

-

Putnam, H. (1967). The nature of mental states. In W. H. Capitan & D. D. Merrill (Eds.), Art, mind, and religion (pp. 37–48). University of Pittsburgh Press. ↩

-

Putnam, H. (1988). Representation and reality. MIT Press. ↩

-

Epstein, R. (18 May 2016). The empty brain. Aeon. https://aeon.co/essays/your-brain-does-not-process-information-and-it-is-not-a-computer ↩

-

Damasio, A. (2003). Looking for Spinoza: Joy, Sorrow and the Feeling Brain. Harvest. ↩

-

On the insufficiency of functionalism applied to LLMs in the legal context, see Prince Tritto, P. and Torres Ortega, I. C., “Jurists of the Gaps: Large Language Models and the Quiet Erosion of Legal Authority”, Masaryk University Journal of Law and Technology, 2025. Available at: https://journals.muni.cz/mujlt/article/view/39797. ↩

-

“SON-IA: Querétaro activa inteligencia artificial en el Poder Judicial y se coloca a la vanguardia nacional”, Códice Informativo, 20 January 2026 [in Spanish]. Available at: https://codiceinformativo.com/2026/01/son-ia-queretaro-activa-inteligencia-artificial-en-el-poder-judicial-y-se-coloca-a-la-vanguardia-nacional/ ↩

-

“Querétaro dicta primera sentencia con inteligencia artificial en México”, Código Qro, 28 April 2026 [in Spanish]. Available at: https://codigoqro.mx/nota/local/2026/04/28/queretaro-dicta-primera-sentencia-inteligencia-artificial-mexico ↩

-

“IA llega a la justicia en Querétaro con SONIA; Polares digitaliza demandas desde mayo”, Expreso Querétaro, 28 April 2026 [in Spanish]. Available at: https://expresoqueretaro.com/2026/04/28/ia-llega-a-la-justicia-en-queretaro-con-sonia-polares-digitaliza-demandas-desde-mayo/ ↩

-

No official source consulted — PJEQ bulletins, published interviews of the presiding magistrate, Council of the Judiciary guidelines, articles from La Jornada, El Imparcial, Diario de Querétaro, Códice Informativo, Foro Jurídico, Visión Empresarial Querétaro — identifies the name or version of the language model used. Verification carried out between 28 and 30 April 2026. ↩